Safespace AI: Content Moderation Platform


Abstract

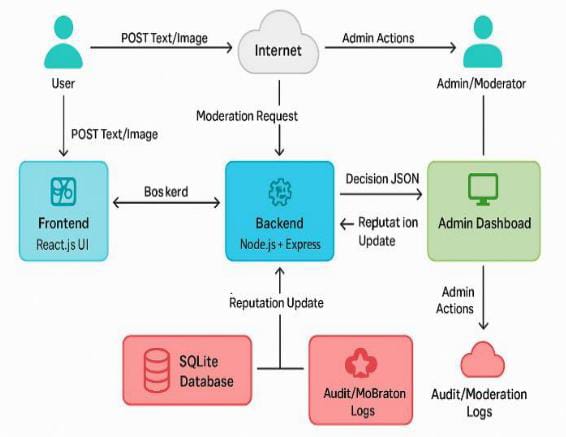

This paper presents Safespace AI, an AI-based content moderation system: a web-based platform that automatically detects and filters inappropriate content posted to online platforms. As user-generated content on social media and community forums grows rapidly, manual moderation has become inefficient and difficult to scale. The proposed system combines machine learning, natural language processing (NLP), and rule-based filtering to analyze user-submitted text and images in real time. Its architecture consists of a React frontend, a Node.js backend exposing Express APIs, and AI services built on Python-based models. Each submission passes through multiple moderation layers (lexicon-based detection, pattern recognition, and machine learning classification), which together decide whether the content is approved, flagged, or blocked. The platform also maintains moderation logs and per-user reputation scores to support accountability and improve decision accuracy. The system aims to reduce harmful content, improve platform safety, and help administrators maintain healthy online communities.
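The layered decision flow described above can be illustrated with a minimal Python sketch. It is written under stated assumptions and is not the authors' implementation. The lexicon (`BLOCKED_TERMS`), the regular expressions (`SUSPICIOUS_PATTERNS`), the thresholds, the reputation step sizes, and the `Moderator`, `User`, and `Decision` names are all hypothetical. The ML layer is modeled as any callable that returns a harm probability, standing in for the Python model service. The sketch handles text only, not images.

```python
import re
from dataclasses import dataclass
from typing import Callable, Dict, List

# Hypothetical lexicon and patterns, for illustration only; the paper does not publish its lists.
BLOCKED_TERMS = {"badword", "slurword"}
SUSPICIOUS_PATTERNS = [
    re.compile(r"(.)\1{5,}"),           # runs of 6+ repeated characters, e.g. "!!!!!!"
    re.compile(r"https?://\S+", re.I),  # embedded links (possible spam)
]


@dataclass
class User:
    user_id: str
    reputation: float = 0.5  # hypothetical scale: 0.0 (untrusted) to 1.0 (trusted)


@dataclass
class Decision:
    action: str          # "approve", "flag", or "block"
    reasons: List[str]   # which rule-based layers fired
    score: float         # harm probability reported by the ML layer


class Moderator:
    """Runs the lexicon, pattern, and ML layers, then records each decision."""

    def __init__(self, classifier: Callable[[str], float],
                 flag_threshold: float = 0.5, block_threshold: float = 0.85) -> None:
        self.classifier = classifier  # stand-in for the Python model service
        self.flag_threshold = flag_threshold
        self.block_threshold = block_threshold
        self.log: List[Dict[str, object]] = []  # in-memory moderation log

    def moderate(self, user: User, text: str) -> Decision:
        reasons: List[str] = []

        # Layer 1: lexicon-based detection on lowercased word tokens.
        tokens = set(re.findall(r"[a-z']+", text.lower()))
        if tokens & BLOCKED_TERMS:
            reasons.append("lexicon")

        # Layer 2: pattern recognition with regular expressions.
        for pattern in SUSPICIOUS_PATTERNS:
            if pattern.search(text):
                reasons.append("pattern:" + pattern.pattern)

        # Layer 3: ML classification (any callable returning a probability in [0, 1]).
        score = self.classifier(text)

        # Hypothetical policy: thresholds shift down (stricter) for users below
        # 0.5 reputation and up (more lenient) for users above it.
        shift = 0.1 * (0.5 - user.reputation)
        if "lexicon" in reasons or score >= self.block_threshold - shift:
            action = "block"
        elif reasons or score >= self.flag_threshold - shift:
            action = "flag"
        else:
            action = "approve"

        # Update reputation with hypothetical step sizes, clamped to [0, 1].
        delta = {"approve": 0.01, "flag": -0.02, "block": -0.1}[action]
        user.reputation = min(1.0, max(0.0, user.reputation + delta))

        # Append an audit record to the moderation log.
        self.log.append({"user": user.user_id, "action": action,
                         "reasons": list(reasons), "score": score})
        return Decision(action, reasons, score)


if __name__ == "__main__":
    mod = Moderator(classifier=lambda text: 0.0)  # stub model that never fires
    alice = User("alice")
    print(mod.moderate(alice, "hello world").action)             # approve
    print(mod.moderate(alice, "you badword").action)             # block
    print(mod.moderate(alice, "see http://example.com").action)  # flag
```

In this sketch a lexicon hit blocks outright, while pattern hits and mid-range model scores only flag content for review. The abstract does not specify how the real system weighs its layers or updates reputation.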

Article Details

This work is licensed under a Creative Commons Attribution-NoDerivatives 4.0 International License.