Explainable Artificial Intelligence Frameworks for Interpretable Decision-Making in High-Stakes Systems


Abstract

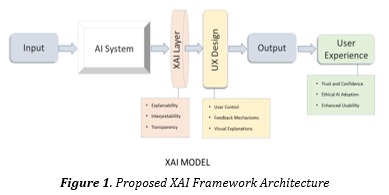

Explainable Artificial Intelligence (XAI) has become an essential research domain for improving the transparency, interpretability, accountability, and trust of modern intelligent systems. As Artificial Intelligence technologies are increasingly deployed in high-stakes sectors such as healthcare, finance, autonomous transportation, cybersecurity, industrial automation, and judicial decision-making, concerns about the opacity of deep learning and other black-box models have intensified. Although conventional AI systems often achieve high predictive accuracy, they frequently fail to provide understandable explanations for their decisions, which limits user confidence and regulatory acceptance. This lack of interpretability complicates safety validation, ethical compliance, fairness assessment, and bias detection. To address these limitations, XAI frameworks integrate interpretable machine learning techniques, feature attribution methods, visualization tools, rule-based reasoning, and human-centered explanation strategies to support transparent and reliable decision-making. This study presents a comprehensive IMRAD-based analysis of XAI frameworks for interpretable decision-making in high-stakes systems. The proposed framework combines deep learning architectures, attention mechanisms, SHAP (SHapley Additive exPlanations) attributions, LIME (Local Interpretable Model-agnostic Explanations), counterfactual reasoning, and reinforcement-learning-driven adaptive explanation modules to enhance transparency and decision reliability. Comparative evaluation indicates that XAI-enabled systems significantly improve fairness, accountability, and user trust while preserving strong predictive performance. The study also highlights emerging challenges, including scalability, explanation consistency, adversarial attacks on explanations, and effective human–AI collaboration, and argues that explainability must become a fundamental architectural component of future trustworthy AI systems.
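SHAP-based feature attribution, as referenced in the abstract, is grounded in Shapley values from cooperative game theory. The sketch below illustrates that idea and is not the study's implementation. It computes exact Shapley values for a single input by enumerating every coalition of features, and any feature outside a coalition is replaced by a baseline value. The scoring function `risk_score`, its coefficients, the input, and the all-zero baseline are all hypothetical. Because enumeration requires O(2^n) model calls, practical SHAP tooling typically relies on approximations or on model-specific algorithms such as KernelSHAP and TreeSHAP.

```python
from itertools import combinations
from math import factorial


def shapley_values(model, x, baseline):
    """Exact Shapley attributions for one input x (illustrative sketch).

    Assumption: features absent from a coalition take their baseline value.
    Cost grows as 2^n model calls, so this only suits a handful of features.
    """
    n = len(x)

    def value(coalition):
        # Hybrid input: coalition features from x, all others from the baseline.
        z = [x[i] if i in coalition else baseline[i] for i in range(n)]
        return model(z)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            # Shapley weight |S|! (n - |S| - 1)! / n! for coalitions of this size.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                # Marginal contribution of feature i when added to coalition s.
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi


def risk_score(z):
    # Hypothetical toy model with one interaction term (features 0 and 2).
    return 0.5 * z[0] + 2.0 * z[1] + z[0] * z[2]


x = [2.0, 1.0, 3.0]
baseline = [0.0, 0.0, 0.0]
phi = shapley_values(risk_score, x, baseline)
print([round(p, 6) for p in phi])  # -> [4.0, 2.0, 3.0]
```

The attributions sum to `risk_score(x) - risk_score(baseline)` = 9. This is the efficiency property that makes Shapley-based explanations additive. The interaction term `z[0] * z[2]` contributes 6 and is split equally between features 0 and 2.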