Image Authenticity Detection: A Dual-Domain Approach Using Vision Transformers with DCT-Based Frequency Analysis and Explainable AI

Abstract

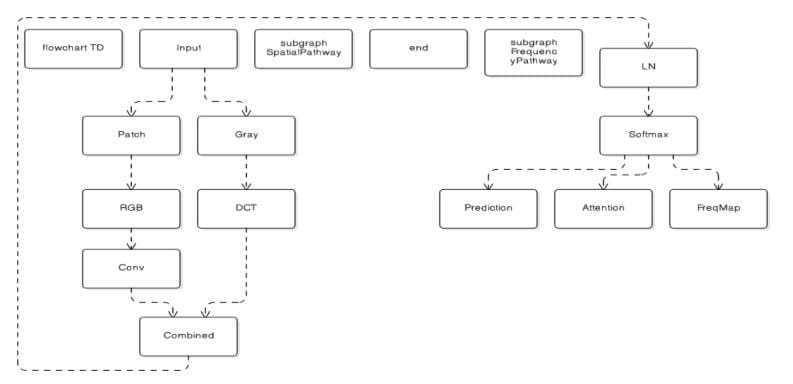

The rapid proliferation of AI-generated images produced by Generative Adversarial Networks (GANs) and diffusion models poses serious challenges for digital media authentication. This paper introduces a deep learning system that combines Vision Transformers (ViT) with Discrete Cosine Transform (DCT) frequency-domain analysis to detect manipulated and AI-generated images. The dual-domain method addresses a key limitation of spatial-only detectors by jointly analysing pixel-level inconsistencies and the latent frequency-domain artifacts characteristic of generative models. We integrate explainable AI mechanisms, namely attention visualization and frequency activation mapping, to provide the transparent, interpretable predictions that forensic use requires. Evaluated on the Digital Image Forensics Dataset of 30,000 images, the system achieves 95.43% accuracy, 95.78% precision, 95.01% recall, and a 95.39% F1-score. The explainability component increases trust in automated detection by highlighting manipulated regions and frequency irregularities. These findings support the value of multi-domain feature fusion and interpretability in building reliable image authentication systems.
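To make the frequency-domain half of the approach concrete, the sketch below computes a log-magnitude 2D DCT spectrum for a grayscale image, along with a simple high-frequency energy ratio. It is an illustration only: the abstract does not say how the DCT features are extracted or fused with the ViT branch, so the function names `dct_log_spectrum` and `high_frequency_ratio`, the `cutoff` parameter, and the use of SciPy's `dctn` are all assumptions rather than the authors' implementation.

```python
import numpy as np
from scipy.fft import dctn


def dct_log_spectrum(gray: np.ndarray) -> np.ndarray:
    """Return the log-magnitude 2D DCT-II spectrum of a grayscale image.

    Hypothetical helper: the log compresses the large dynamic range of the
    coefficients so that weak high-frequency components remain visible.
    """
    coeffs = dctn(gray.astype(np.float64), type=2, norm="ortho")
    return np.log1p(np.abs(coeffs))


def high_frequency_ratio(gray: np.ndarray, cutoff: float = 0.5) -> float:
    """Fraction of DCT energy at normalized indices with u + v >= cutoff.

    Hypothetical summary statistic (not taken from the paper): u and v are
    the row and column frequency indices divided by the image height and
    width, so each lies in [0, 1).
    """
    coeffs = dctn(gray.astype(np.float64), type=2, norm="ortho")
    energy = coeffs ** 2
    h, w = gray.shape
    u = np.arange(h)[:, None] / h
    v = np.arange(w)[None, :] / w
    mask = (u + v) >= cutoff
    total = energy.sum()
    return float(energy[mask].sum() / total) if total > 0 else 0.0


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    flat = np.full((32, 32), 128.0)        # constant image: energy only in the DC term
    noise = rng.standard_normal((32, 32))  # white noise: energy spread over all frequencies
    print(high_frequency_ratio(flat), high_frequency_ratio(noise))
```

In a dual-domain detector, a spectrum like this (or features learned from it) would be passed to the classifier alongside the spatial ViT features; how the two are combined is not described in the abstract.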

Article Details

This work is licensed under a Creative Commons Attribution-NoDerivatives 4.0 International License.