Reinforcement Learning-Driven Autonomous Navigation for Mobile Robots in Unstructured and Dynamic Environments

Abstract

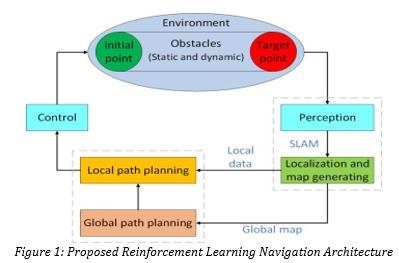

Autonomous navigation in unstructured and dynamic environments remains one of the most challenging problems in mobile robotics because of uncertainty, environmental variability, and real-time decision-making requirements. Traditional rule-based and model-driven navigation systems often struggle to adapt to changing conditions and unforeseen obstacles. This research proposes a reinforcement learning-driven navigation framework for mobile robots operating in complex environments. The framework integrates deep reinforcement learning with sensor-based perception and adaptive path planning to enable intelligent navigation and obstacle avoidance. Reinforcement learning agents learn navigation policies through continuous interaction with the environment. State representations are built from sensory inputs such as LiDAR, cameras, and proximity sensors, while reward mechanisms guide the robot toward efficient, collision-free navigation. The framework also incorporates dynamic environment modeling, exploration–exploitation balancing, and policy optimization techniques to improve robustness and convergence stability. Experimental evaluation indicates that reinforcement learning-based navigation significantly improves adaptability and decision-making performance compared with conventional navigation approaches, and that the proposed model achieves superior navigation accuracy, obstacle avoidance capability, and path efficiency in complex environments. This research contributes a scalable and intelligent navigation framework for next-generation autonomous robotic systems.
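
To make the ingredients named in the abstract concrete, the following is a minimal sketch of a sensor-derived state vector, a shaped reward, and an exploration–exploitation rule. It is not the authors' implementation: the function names, the thresholds (`goal_radius`, `collision_range`, `max_range`), the reward magnitudes, and the choice of epsilon-greedy selection are all assumptions made for illustration.

```python
import math
import random


def build_state(lidar_ranges, goal_dist, goal_bearing, max_range=10.0):
    """Assumed state layout: normalised LiDAR scan followed by the goal in polar form."""
    # Clip each beam to max_range and scale to [0, 1].
    scan = [min(r, max_range) / max_range for r in lidar_ranges]
    # Encode the bearing as (sin, cos) to avoid the wrap-around at +/- pi.
    return scan + [goal_dist, math.sin(goal_bearing), math.cos(goal_bearing)]


def navigation_reward(prev_dist, curr_dist, min_obstacle_range,
                      goal_radius=0.3, collision_range=0.2,
                      progress_gain=1.0, step_penalty=0.01):
    """Hypothetical shaped reward: penalise collisions, reward goal arrival and progress."""
    if min_obstacle_range < collision_range:
        return -1.0  # too close to an obstacle: treated as a collision
    if curr_dist < goal_radius:
        return 1.0   # inside the goal region
    # Otherwise reward the distance gained toward the goal, minus a small time cost.
    return progress_gain * (prev_dist - curr_dist) - step_penalty


def epsilon_greedy(q_values, epsilon, rng=random):
    """Explore with probability epsilon, otherwise take the highest-valued action."""
    if rng.random() < epsilon:
        return rng.randrange(len(q_values))
    return max(range(len(q_values)), key=lambda a: q_values[a])
```

In a training loop of this kind, `epsilon` would typically be decayed over episodes so that the agent explores widely at first and exploits its learned policy later; the schedule itself is a design choice that the abstract does not specify.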