CardioLung: AI-Driven Heart and Lung Analysis

Abstract

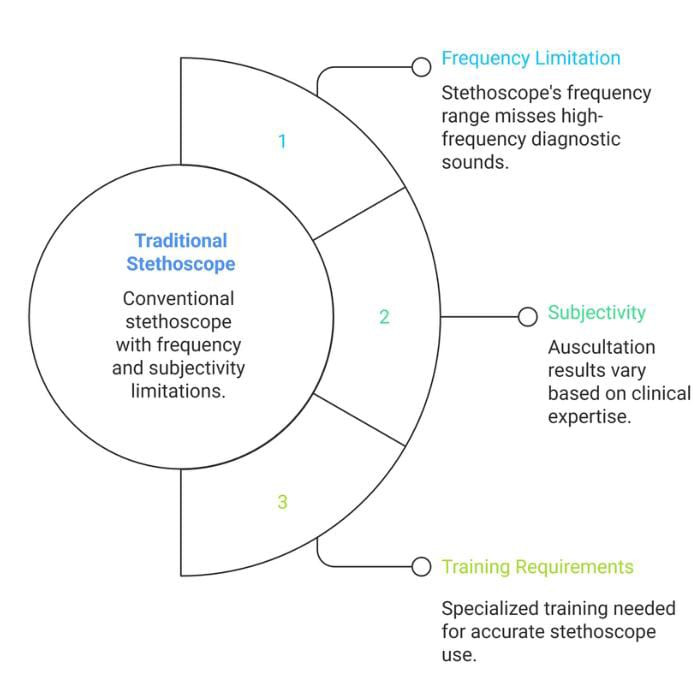

Early detection of cardiovascular and pulmonary diseases is essential for improving patient outcomes, but traditional methods such as auscultation are often subjective and resource-intensive. Deep learning has shown promise for analyzing auscultation audio, but such approaches usually lack a comprehensive, patient-focused interpretation of their results.

In this paper, we introduce a hybrid AI system that combines three components: chest auscultation audio, analysis by specialized deep learning models, and symptoms reported by the user. Our pipeline first converts fixed-length 5-second audio clips into 2D Mel spectrograms. These spectrograms are then analyzed by a 'Committee of Experts': two separate Convolutional Neural Networks (CNNs), one specialized in heart sounds and one in lung sounds. We evaluated two architectures for these specialists: a custom CNN and a pre-trained EfficientNetV2-B0 model adapted via transfer learning. The EfficientNetV2-B0 model performed better, achieving 92.15% accuracy on heart sounds and 90.5% on lung sounds.
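The abstract does not state the sample rate, FFT size, hop length, or number of Mel bands used to build the spectrograms, nor which audio library was used. As a minimal sketch of the conversion step, assuming a hypothetical 4 kHz sample rate, a 512-point FFT, a hop of 128 samples, and 64 Mel bands, the NumPy code below pads or trims a recording to 5 seconds and computes a log-Mel spectrogram that a CNN could treat as a 2D image. The function names and parameter values are illustrative assumptions, not the authors' code.

```python
import numpy as np


def hz_to_mel(f):
    # HTK-style Mel scale
    return 2595.0 * np.log10(1.0 + f / 700.0)


def mel_to_hz(m):
    # Inverse of hz_to_mel
    return 700.0 * (10.0 ** (m / 2595.0) - 1.0)


def fix_length(signal, sr, seconds=5.0):
    # Trim or zero-pad the recording to exactly `seconds` of audio
    signal = np.asarray(signal, dtype=float)
    target = int(sr * seconds)
    if len(signal) >= target:
        return signal[:target]
    return np.pad(signal, (0, target - len(signal)))


def mel_filterbank(sr, n_fft, n_mels):
    # Triangular filters spaced evenly on the Mel scale, shape (n_mels, n_fft // 2 + 1)
    n_bins = n_fft // 2 + 1
    fft_freqs = np.linspace(0.0, sr / 2.0, n_bins)
    mel_points = np.linspace(hz_to_mel(0.0), hz_to_mel(sr / 2.0), n_mels + 2)
    hz_points = mel_to_hz(mel_points)
    fb = np.zeros((n_mels, n_bins))
    for i in range(n_mels):
        left, center, right = hz_points[i], hz_points[i + 1], hz_points[i + 2]
        rising = (fft_freqs - left) / (center - left)
        falling = (right - fft_freqs) / (right - center)
        fb[i] = np.maximum(0.0, np.minimum(rising, falling))
    return fb


def log_mel_spectrogram(signal, sr, n_fft=512, hop=128, n_mels=64):
    # Assumes len(signal) >= n_fft (guaranteed after fix_length for the settings used here)
    signal = np.asarray(signal, dtype=float)
    window = np.hanning(n_fft)
    starts = range(0, len(signal) - n_fft + 1, hop)
    frames = np.stack([signal[s:s + n_fft] * window for s in starts])
    power = np.abs(np.fft.rfft(frames, axis=1)) ** 2      # (n_frames, n_bins)
    mel = power @ mel_filterbank(sr, n_fft, n_mels).T      # (n_frames, n_mels)
    return np.log(mel + 1e-10).T                           # (n_mels, n_frames): the 2D "image"
```

Under these assumed settings a 5-second clip yields a 64 × 153 array. In practice a library routine such as `librosa.feature.melspectrogram` is commonly used for this step; the hand-written version simply makes each stage (framing, windowing, power spectrum, Mel filtering, log compression) explicit.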

The distinguishing final step of our system is an LLM-based synthesizer that combines the technical outputs of both specialists with the user's described symptoms into a single report. In a qualitative study with 15 participants, 93% (14 of 15) found the LLM-generated report much clearer and more useful than the raw technical output. This hybrid, multi-modal system offers a reliable, accurate, and easy-to-use framework for e-Health screening.
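The abstract does not describe how the specialists' outputs are packaged for the LLM. The sketch below assumes each specialist in the Committee of Experts returns a list of class probabilities, keeps the top class per specialist, and formats those findings together with the user's symptoms into a text prompt. The class names, the `SpecialistFinding` structure, the function names, and the prompt wording are all hypothetical, and the call to an actual LLM is deliberately left out.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Sequence


@dataclass
class SpecialistFinding:
    organ: str          # which specialist produced the finding, e.g. "heart"
    label: str          # top-scoring class name (hypothetical labels)
    confidence: float   # probability of that class


def run_committee(
    spectrograms: Dict[str, object],
    experts: Dict[str, Callable[[object], Sequence[float]]],
    class_names: Dict[str, List[str]],
) -> List[SpecialistFinding]:
    # Route each spectrogram to its own specialist and keep the most likely class
    findings = []
    for organ, model in experts.items():
        probs = model(spectrograms[organ])
        best = max(range(len(probs)), key=lambda i: probs[i])
        findings.append(SpecialistFinding(organ, class_names[organ][best], probs[best]))
    return findings


def build_synthesis_prompt(findings: List[SpecialistFinding], symptoms: str) -> str:
    # Combine specialist outputs and user-reported symptoms into one prompt string
    lines = [
        "You are assisting with a preliminary e-Health screening report.",
        "Specialist model outputs:",
    ]
    for f in findings:
        lines.append(f"- {f.organ} model: {f.label} (confidence {f.confidence:.0%})")
    lines.append(f"Patient-reported symptoms: {symptoms.strip() or 'none reported'}")
    lines.append(
        "Write a clear, non-technical summary for the patient and "
        "recommend consulting a clinician for any abnormal finding."
    )
    return "\n".join(lines)
```

For example, with stub experts where the heart model returns `[0.1, 0.9]` for the hypothetical classes `["normal", "murmur"]`, the resulting prompt contains the line `- heart model: murmur (confidence 90%)`. Keeping prompt assembly separate from the model call makes it easy to inspect exactly what the LLM receives.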

This work is licensed under a Creative Commons Attribution-NoDerivatives 4.0 International License.