A Comprehensive Review of Hardware Efficiency of CNN Architecture Design using Decoder-Based Low Power Approximate Multiplier and Error Reduced Carry Prediction Approximate Adder for MNIST Dataset Classification

Abstract

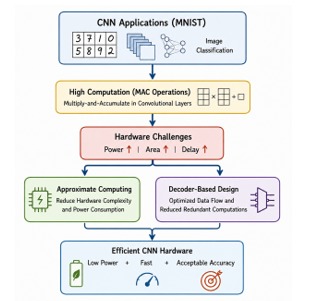

Convolutional Neural Networks (CNNs) are the backbone of modern image classification systems, including those evaluated on benchmark datasets such as MNIST. CNN architectures are computationally intensive, however, because they rely on large numbers of multiply-and-accumulate (MAC) operations, which drive up power consumption and hardware complexity. Recent research has therefore turned to approximate computing techniques, in particular approximate multipliers and adders, to improve hardware efficiency while keeping accuracy acceptable. Approximate multipliers trade computational precision for lower power and area; studies report that such designs can achieve up to 18% area reduction together with improved energy efficiency in signal and image processing tasks. Similarly, approximate adders such as error-reduced carry prediction adders reduce propagation delay and power consumption, making them suitable for CNN accelerators. In CNN architectures, arithmetic operations dominate hardware utilization, particularly the multipliers used in convolution layers, and research indicates that replacing exact multipliers with approximate versions can significantly reduce energy consumption without substantial degradation in classification accuracy. Decoder-based architectures optimize computation further by reducing redundant operations. This review presents a comprehensive analysis of hardware-efficient CNN designs built on approximate multipliers and adders, with a focus on MNIST classification, and highlights architectural innovations, comparative performance, and future research directions in energy-efficient deep learning hardware systems.
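To make the precision-for-hardware trade-off concrete, the following is a minimal behavioral sketch in Python of an approximate MAC unit. It is not the decoder-based multiplier or the error-reduced carry prediction adder that the review covers: it swaps in two simpler, generic techniques, operand truncation for the multiplier and a lower-part OR style adder, purely for illustration. The function names (`truncated_mul`, `loa_add`, `approx_mac`) and the parameters `t`, `k`, and `width` are assumptions introduced here, and operands are assumed to be unsigned integers.

```python
def truncated_mul(a: int, b: int, t: int = 2) -> int:
    """Approximate unsigned multiply: zero the t low bits of each operand first.

    In hardware, dropping low bits removes partial-product rows and columns;
    here only the numerical effect is modeled.
    """
    return ((a >> t) << t) * ((b >> t) << t)


def loa_add(a: int, b: int, k: int = 4, width: int = 16) -> int:
    """Approximate unsigned add in the style of a lower-part OR adder.

    The k low bits are combined with a bitwise OR (no carry chain); the upper
    bits are added exactly, with a carry-in predicted from the most
    significant bits of the two lower parts.
    """
    low_mask = (1 << k) - 1
    low = (a | b) & low_mask
    # Predicted carry into the exact upper part (0 when k == 0).
    carry = ((a >> (k - 1)) & (b >> (k - 1)) & 1) if k > 0 else 0
    high = ((a >> k) + (b >> k) + carry) << k
    return (high | low) & ((1 << width) - 1)


def approx_mac(xs, ws, t: int = 2, k: int = 4, width: int = 24) -> int:
    """Accumulate approximate products with the approximate adder."""
    acc = 0
    for x, w in zip(xs, ws):
        acc = loa_add(acc, truncated_mul(x, w, t), k, width)
    return acc


if __name__ == "__main__":
    pixels = [12, 200, 37, 255]    # hypothetical 8-bit activations
    weights = [3, 17, 64, 9]       # hypothetical 8-bit weights
    exact = sum(p * w for p, w in zip(pixels, weights))
    approx = approx_mac(pixels, weights)
    print(f"exact={exact} approx={approx} error={approx - exact}")
```

Setting `t=0` and `k=0` recovers exact arithmetic, so the two parameters act as knobs for exploring how much numerical error a given amount of simplification introduces before classification accuracy starts to suffer.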