Multimodal Deep Learning Architectures for Integrated Analysis of Text, Image, and Sensor Data in Intelligent Systems

Abstract

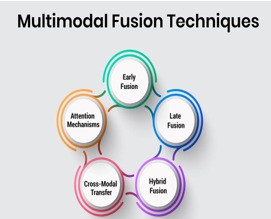

The rapid growth of intelligent systems, Internet of Things (IoT) infrastructures, autonomous platforms, healthcare monitoring systems, and smart cyber-physical environments has generated massive volumes of heterogeneous multimodal data, including text, image, audio, video, and sensor streams. Traditional unimodal approaches often fail to capture the relationships and contextual dependencies that span modalities, which limits the effectiveness of intelligent decision-making. Multimodal deep learning has therefore emerged as a computational paradigm for integrating heterogeneous data sources to improve representation learning, contextual understanding, and analytics. This research proposes a multimodal deep learning architecture for the integrated analysis of text, image, and sensor data in intelligent systems. The framework combines three modality-specific components within a unified fusion architecture: transformer-based natural language processing for text, convolutional neural networks for visual feature extraction, and recurrent/temporal deep learning mechanisms for sensor streams. It integrates feature extraction, latent representation learning, cross-modal attention mechanisms, and multimodal fusion strategies to support adaptive analytics and real-time decision-making, so that semantic understanding of text, visual perception from images, and temporal analysis of sensor streams are carried out simultaneously. Experimental evaluation demonstrates that the proposed framework significantly improves analytical accuracy, contextual reasoning, robustness, and predictive performance compared to conventional unimodal systems. Furthermore, cross-modal representation learning enhances the system’s ability to capture complementary information across heterogeneous modalities and improves its adaptability in complex intelligent environments.

Keywords: Multimodal Deep Learning, Intelligent Systems, Text Analytics, Image Processing, Sensor Data Fusion, Cross-Modal Learning.
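
The cross-modal attention and fusion step named in the abstract can be illustrated with a minimal sketch. The abstract does not specify the encoders, projection layers, or fusion rule, so everything here is an assumption made for illustration: the function names (`cross_modal_attention`, `fuse`), the use of unprojected scaled dot-product attention, the averaging fusion, and the toy embeddings standing in for the outputs of the text, image, and sensor encoders. It uses only the Python standard library and is not the authors' implementation.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def cross_modal_attention(embeddings):
    """Scaled dot-product attention across modality embeddings.

    embeddings: dict mapping a modality name to a list of floats.
    All vectors must share the same length d (assumed here; a real
    system would project encoder outputs into a shared latent space).
    Returns (attended, weights): one context vector per modality and
    the attention weights each modality assigns to every modality.
    """
    names = list(embeddings)
    d = len(embeddings[names[0]])
    if any(len(embeddings[n]) != d for n in names):
        raise ValueError("all modality embeddings must have the same length")

    attended, weights = {}, {}
    for query in names:
        # Similarity of the query modality to every modality (itself included).
        scores = [dot(embeddings[query], embeddings[key]) / math.sqrt(d)
                  for key in names]
        w = softmax(scores)
        weights[query] = dict(zip(names, w))
        # Context vector: attention-weighted sum of all modality embeddings.
        attended[query] = [
            sum(w[i] * embeddings[key][j] for i, key in enumerate(names))
            for j in range(d)
        ]
    return attended, weights


def fuse(attended):
    """Fuse the per-modality context vectors by element-wise averaging."""
    vectors = list(attended.values())
    return [sum(column) / len(vectors) for column in zip(*vectors)]


# Toy embeddings (hypothetical values, not data from the article).
example = {
    "text": [1.0, 0.0, 0.0, 0.0],
    "image": [0.0, 1.0, 0.0, 0.0],
    "sensor": [0.0, 0.0, 1.0, 0.0],
}
context, attn = cross_modal_attention(example)
fused_vector = fuse(context)
print(attn["text"])
print(fused_vector)
```

In this sketch each modality attends to all three embeddings, so a modality whose embedding aligns with another receives more of that modality's information in its context vector. A trained system would typically replace the raw dot products with learned query, key, and value projections and the averaging step with a learned fusion layer.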