Multi-Agent Systems for Dynamic Resource Scheduling in Cloud and Edge Data Centers

Abstract

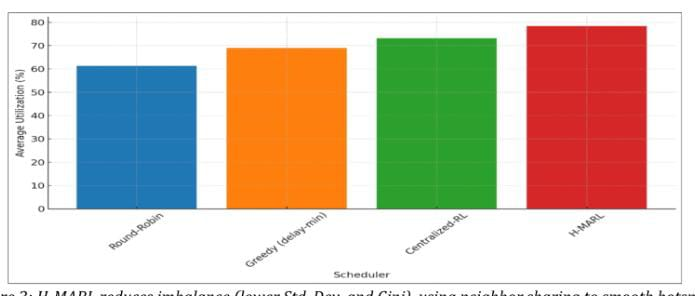

The rapid proliferation of latency-sensitive and compute-intensive applications across IoT, 5G, and smart-city infrastructures has intensified the need for intelligent, scalable task scheduling in distributed cloud–edge computing environments. Traditional centralized schedulers and static heuristics struggle to cope with highly dynamic workloads, heterogeneous resource profiles, user mobility, and fluctuating network conditions. This paper introduces a Hybrid Multi-Agent Reinforcement Learning (H-MARL) framework for autonomous resource scheduling in which each edge node operates as an intelligent agent that makes independent decisions while periodically synchronizing with a lightweight cloud-based coordinator. The proposed model combines localized decision-making with global policy refinement, enabling adaptive local task execution, cooperative peer offloading, and selective cloud escalation. Experimental evaluations using CloudSim-Plus, EdgeSim, and real-world workload traces show significant improvements over heuristic and centralized reinforcement learning baselines. The H-MARL architecture achieves lower latency, fewer SLA violations, higher throughput, and more balanced resource utilization while minimizing unnecessary migrations and reliance on the cloud. The results indicate that hybrid multi-agent intelligence enhances scalability, reliability, and responsiveness, making the approach well suited to next-generation distributed systems that support real-time and mission-critical edge workloads.
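To make the hybrid scheme described above more concrete, the following is a minimal illustrative sketch, not the authors' implementation. The abstract does not specify the learning algorithm, state representation, or synchronization rule, so everything here is an assumption: each edge agent uses tabular epsilon-greedy Q-learning over three actions (run locally, offload to a peer, escalate to the cloud), and the coordinator simply averages the agents' Q-tables and broadcasts the result. The names `EdgeAgent` and `synchronize`, the load-level states, and the toy latency numbers are all hypothetical.

```python
import random
from collections import defaultdict

# The three scheduling options named in the abstract.
ACTIONS = ("local", "peer", "cloud")


class EdgeAgent:
    """Hypothetical edge-node agent: tabular epsilon-greedy Q-learning (assumed)."""

    def __init__(self, alpha=0.1, gamma=0.9, epsilon=0.1, seed=None):
        self.alpha, self.gamma, self.epsilon = alpha, gamma, epsilon
        self.rng = random.Random(seed)
        # Q-table: state -> {action: value}; unseen states start at zero.
        self.q = defaultdict(lambda: dict.fromkeys(ACTIONS, 0.0))

    def act(self, state):
        # Explore with probability epsilon, otherwise pick the best-known action.
        if self.rng.random() < self.epsilon:
            return self.rng.choice(ACTIONS)
        values = self.q[state]
        return max(ACTIONS, key=values.get)

    def learn(self, state, action, reward, next_state):
        # Standard one-step Q-learning update.
        best_next = max(self.q[next_state].values())
        target = reward + self.gamma * best_next
        self.q[state][action] += self.alpha * (target - self.q[state][action])


def synchronize(agents):
    """Hypothetical coordinator step: average Q-values and broadcast them.

    Agents that never visited a state contribute zeros to the average.
    """
    states = set()
    for agent in agents:
        states.update(agent.q.keys())
    for state in states:
        for action in ACTIONS:
            mean = sum(a.q[state][action] for a in agents) / len(agents)
            for a in agents:
                a.q[state][action] = mean


if __name__ == "__main__":
    # Toy latencies (illustrative values only) per load level and action.
    latency = {
        "low": {"local": 1.0, "peer": 2.0, "cloud": 5.0},
        "high": {"local": 6.0, "peer": 3.0, "cloud": 5.0},
    }
    agents = [EdgeAgent(seed=i) for i in range(3)]
    env = random.Random(42)
    for step in range(1, 3001):
        for agent in agents:
            state = env.choice(("low", "high"))
            action = agent.act(state)
            next_state = env.choice(("low", "high"))
            # Reward is negative latency, so lower latency is preferred.
            agent.learn(state, action, -latency[state][action], next_state)
        if step % 100 == 0:  # periodic synchronization with the coordinator
            synchronize(agents)
    print({s: agents[0].act(s) for s in ("low", "high")})
```

In this sketch, agents learn independently between synchronization points, which mirrors the "localized decision-making with global policy refinement" split. A real system would likely use richer state (queue length, link quality, mobility) and a more careful aggregation rule than plain averaging.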